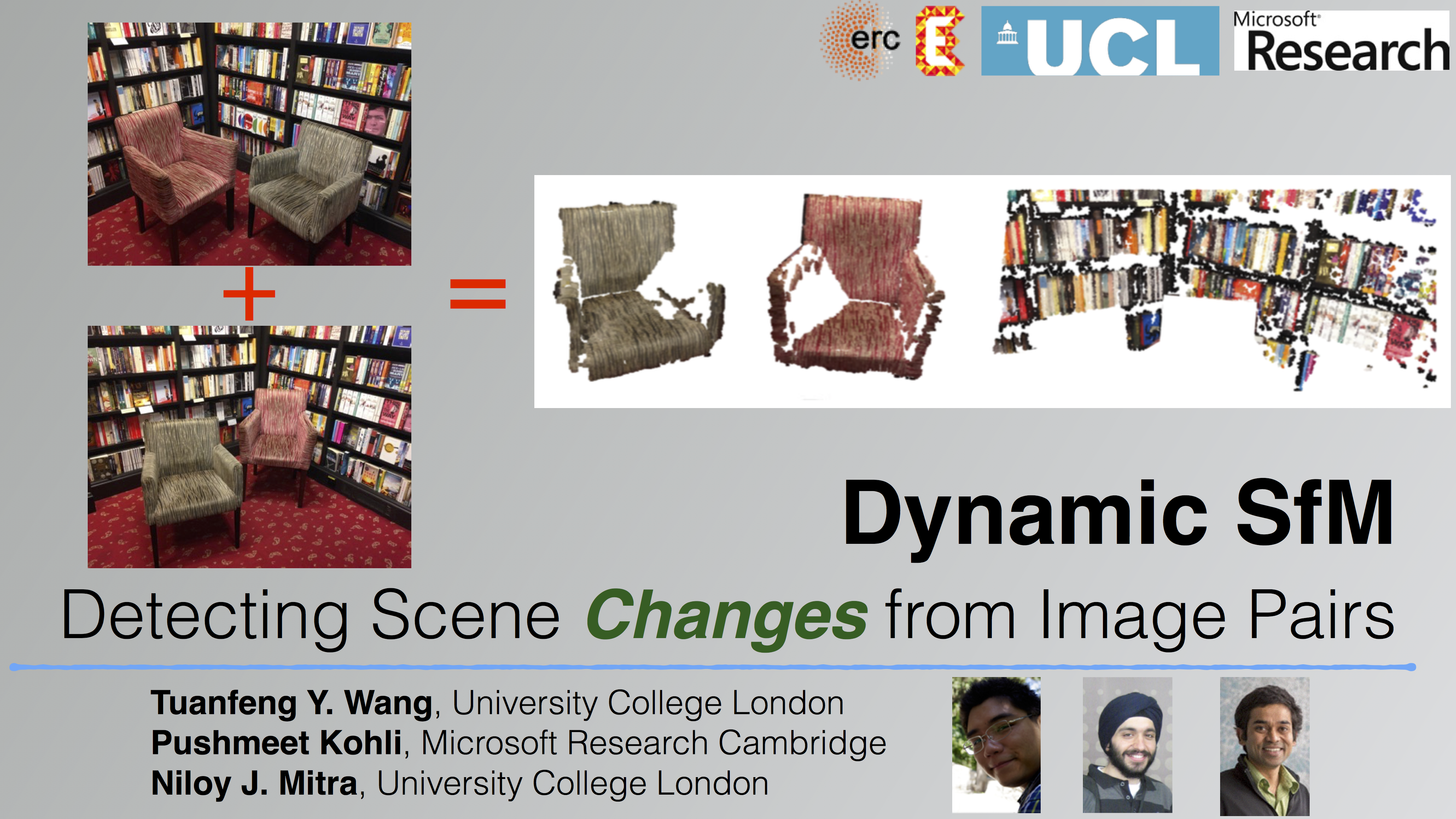

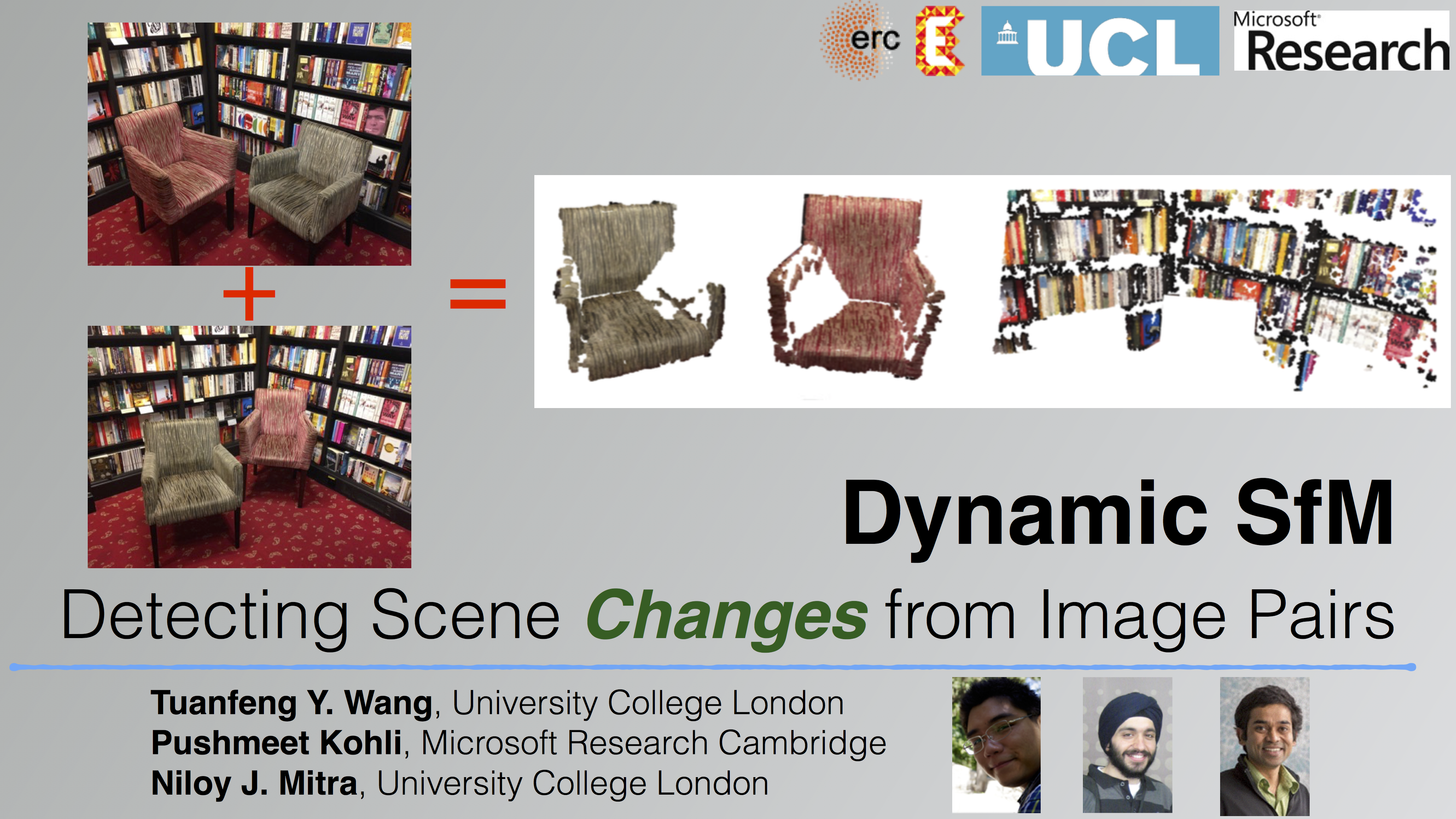

Dynamic SfM: Detecting Scene Changes from Image Pairs

- Tuanfeng Y. Wang¹

- Pushmeet Kohli²

- Niloy J. Mitra¹

¹University College London, ²Microsoft Research Cambridge

Symposium on Geometry Processing 2015

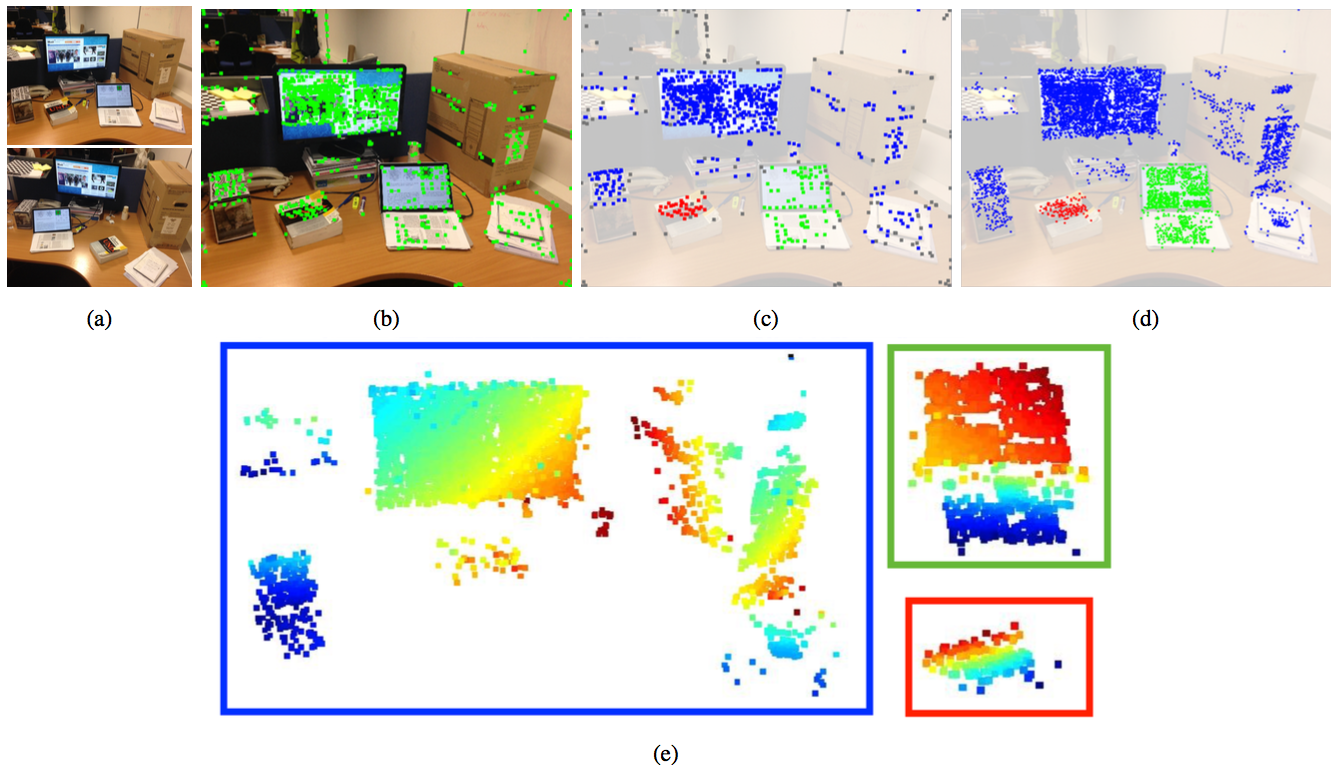

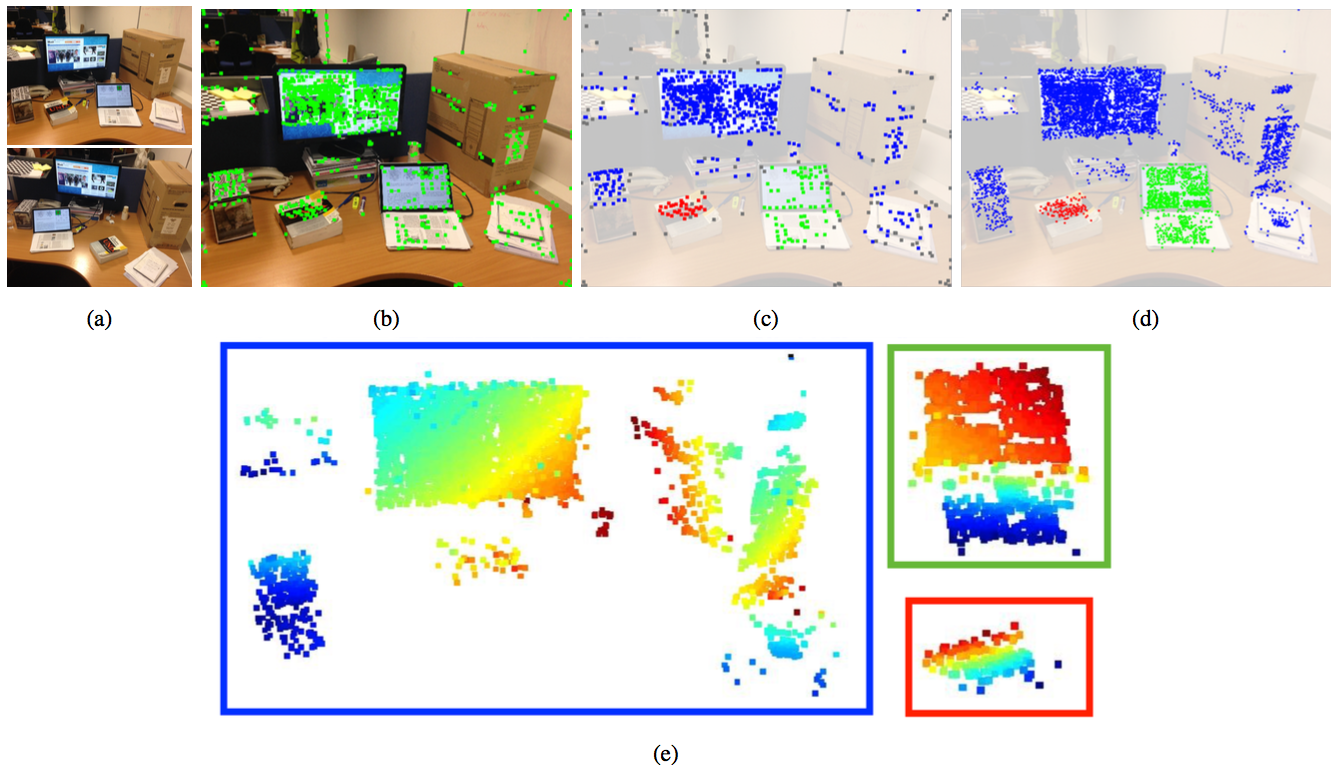

Figure 1: Using the input image pair (a), our algorithm automatically performs change detection between the two images and calculates the motion of each rigidly moving part (b) while simultaneously estimating their 3D structure to enhance performance (c). Note that due to two-view ambiguity, we have no information about the absolute depth of each object. Instead, we show the depth maps for each object separately.

Abstract

Detecting changes in scenes is important in many scene understanding tasks. In this paper, we pursue this goal simply from a pair of image recordings. Specifically, our goal is to infer what the objects are, how they are structured, and how they moved between the images. The problem is challenging as large changes make point-level correspondence establishment difficult, which in turn breaks the assumptions of standard Structure-from-Motion (SfM). We propose a novel algorithm for dynamic SfM wherein we first generate a pool of potential corresponding points by hypothesizing over possible movements, and then use a continuous optimization formulation to obtain a low complexity solution that best explains the scene recordings, i.e., the input image pairs. We test the algorithm on a variety of examples to recover the multiple object structures and their changes.

Method Overview

Figure 2: Algorithm pipeline. Starting from two images (a) of a dynamic scene with multiple objects undergoing rigid motions, we first generate a set of candidate correspondence pairs using our pre-boosting strategy, indicated by green dots in (b). In this example, 1046 correspondence pairs were generated, compared with only 554 when using SIFT directly. Next, we use continuous optimization to simultaneously recover the motion of each rigid part (c) along with its coarse 3D structure (colors show assignment to the different motion groups). Finally, we use the grouping result to obtain a denser set of correspondence pairs (d). Here, 5281 correspondence pairs are generated from the 832 inlier correspondence pairs obtained from pre-boosting. We show the structure of each object (e), color-coded by estimated depth, with distance increasing from blue to red.
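The grouping step in the pipeline above can be illustrated with a much simpler stand-in than the continuous optimization used in the paper. The sketch below assumes that one essential matrix per rigid motion is already known, that image points are given in normalized camera coordinates, and that each correspondence is simply assigned to the motion with the smallest Sampson error (or marked as an outlier above a threshold). All function names, the threshold value, and the synthetic scene are hypothetical and chosen only for illustration; this is not the authors' implementation.

```python
import numpy as np

def skew(t):
    """Return the 3x3 cross-product matrix [t]_x."""
    return np.array([[0.0, -t[2], t[1]],
                     [t[2], 0.0, -t[0]],
                     [-t[1], t[0], 0.0]])

def rotation_y(angle):
    """Rotation about the y axis by `angle` radians."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])

def essential_matrix(R, t):
    """E = [t]_x R, so that x2^T E x1 = 0 for points moved by X2 = R X1 + t."""
    return skew(t) @ R

def sampson_distance(E, x1, x2):
    """First-order geometric error of homogeneous matches (N, 3) under E."""
    Ex1 = x1 @ E.T                      # row i is E @ x1_i
    Etx2 = x2 @ E                       # row i is E.T @ x2_i
    num = np.sum(x2 * Ex1, axis=1) ** 2
    den = Ex1[:, 0] ** 2 + Ex1[:, 1] ** 2 + Etx2[:, 0] ** 2 + Etx2[:, 1] ** 2
    return num / den

def assign_to_motions(models, x1, x2, threshold=1e-6):
    """Label each match with its best-fitting motion index, or -1 (outlier)."""
    errors = np.stack([sampson_distance(E, x1, x2) for E in models], axis=1)
    labels = np.argmin(errors, axis=1)
    labels[errors.min(axis=1) > threshold] = -1
    return labels

def make_group(rng, R, t, n):
    """Synthetic rigid object: n 3D points seen before and after motion (R, t)."""
    X = rng.uniform([-1.0, -1.0, 4.0], [1.0, 1.0, 6.0], size=(n, 3))
    X2 = X @ R.T + t
    return X / X[:, 2:3], X2 / X2[:, 2:3]   # homogeneous points with z = 1

# Two objects, each undergoing its own (assumed known) rigid motion.
rng = np.random.default_rng(0)
motions = [(rotation_y(0.1), np.array([0.5, 0.0, 0.1])),
           (rotation_y(-0.2), np.array([-0.3, 0.2, 0.0]))]
groups = [make_group(rng, R, t, 50) for R, t in motions]
x1 = np.vstack([g[0] for g in groups])
x2 = np.vstack([g[1] for g in groups])
models = [essential_matrix(R, t) for R, t in motions]
labels = assign_to_motions(models, x1, x2)
print("matches per motion group:", np.bincount(labels[labels >= 0]))
```

In the setting the page describes, the motions are not known in advance and have to be recovered jointly with the grouping, which is what makes the actual problem considerably harder than this hard-assignment sketch.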

Results

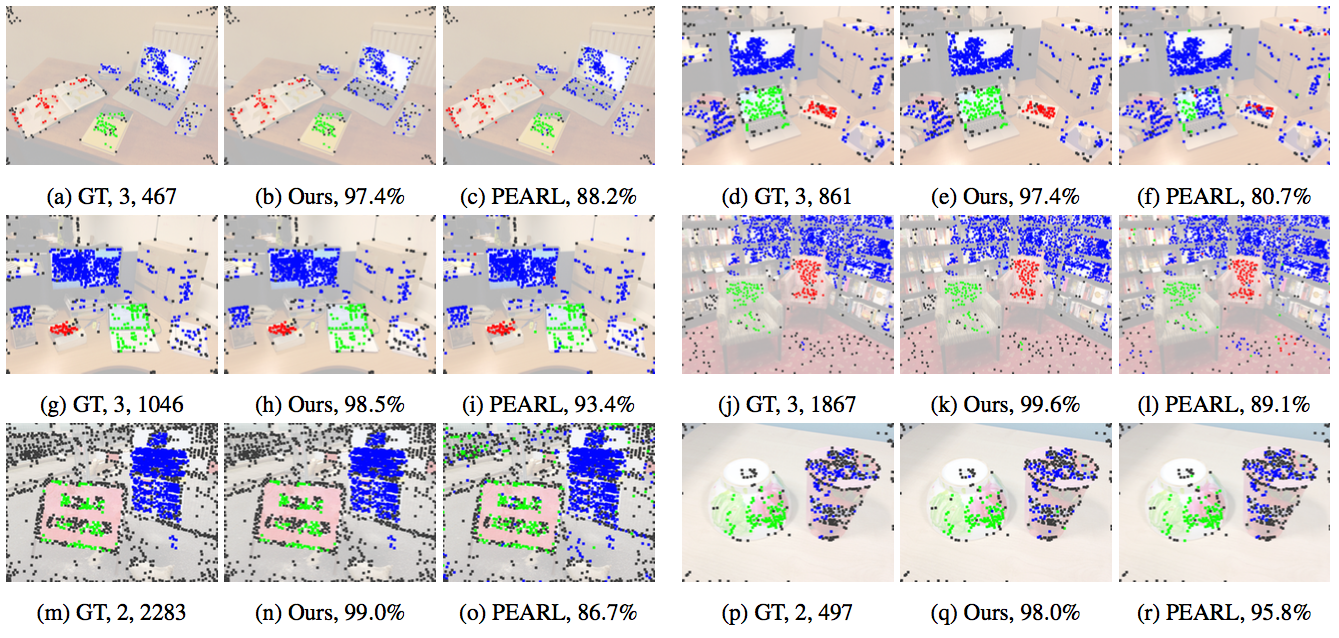

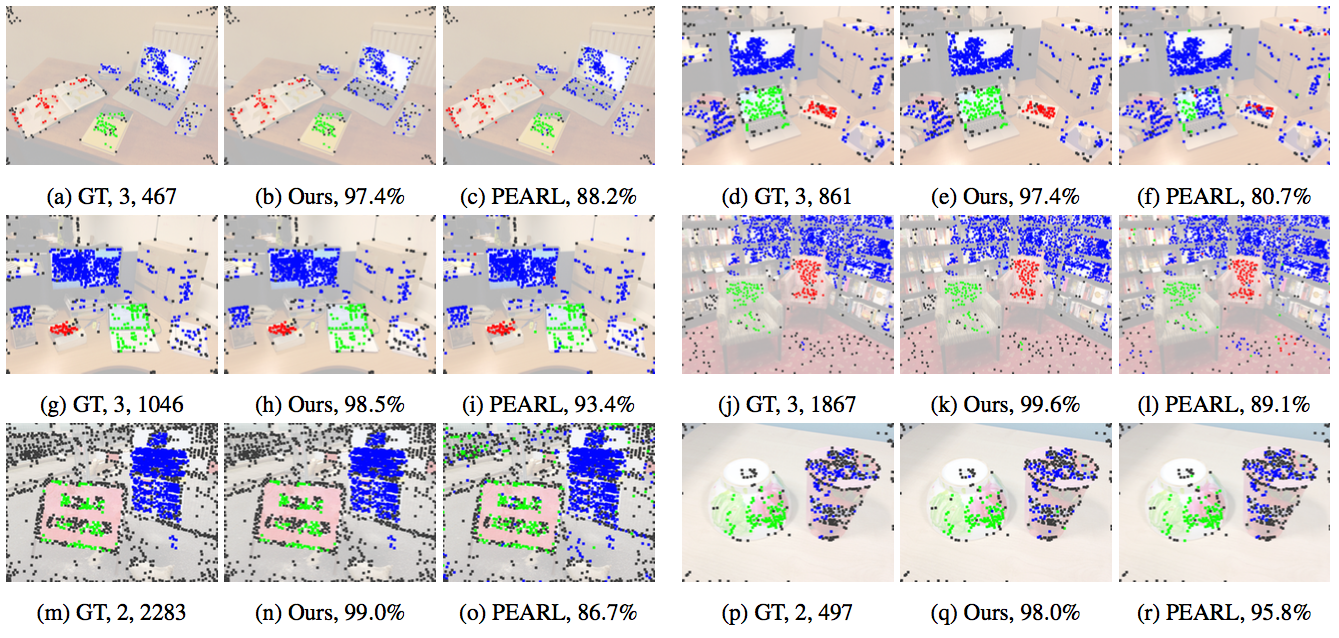

Figure 6: Algorithm performance. Ground truth with the number of motions and the number of correspondences (inliers and outliers), our result, and PEARL's result, each with labeling correctness. Note that PEARL was provided with our robust initialization.

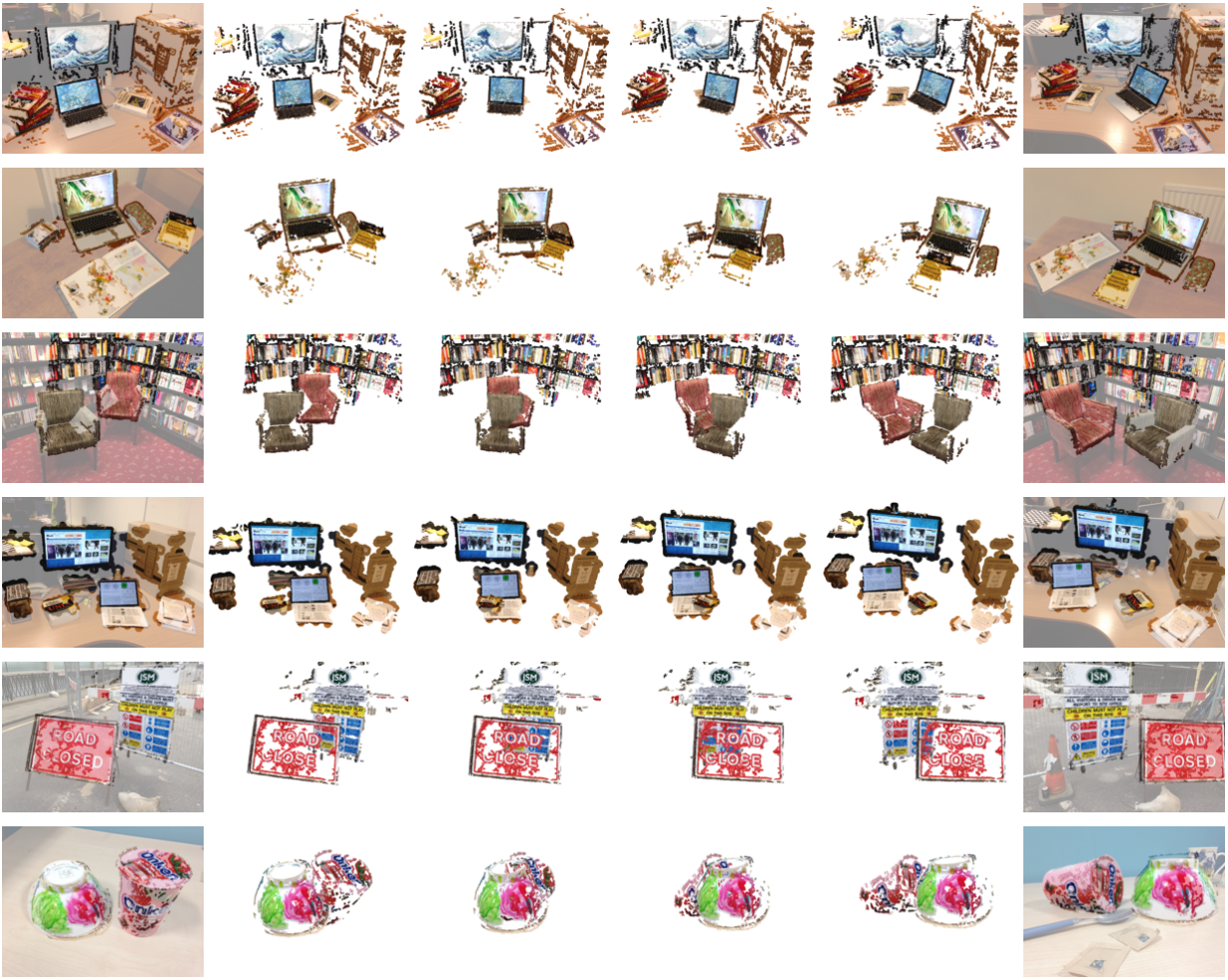

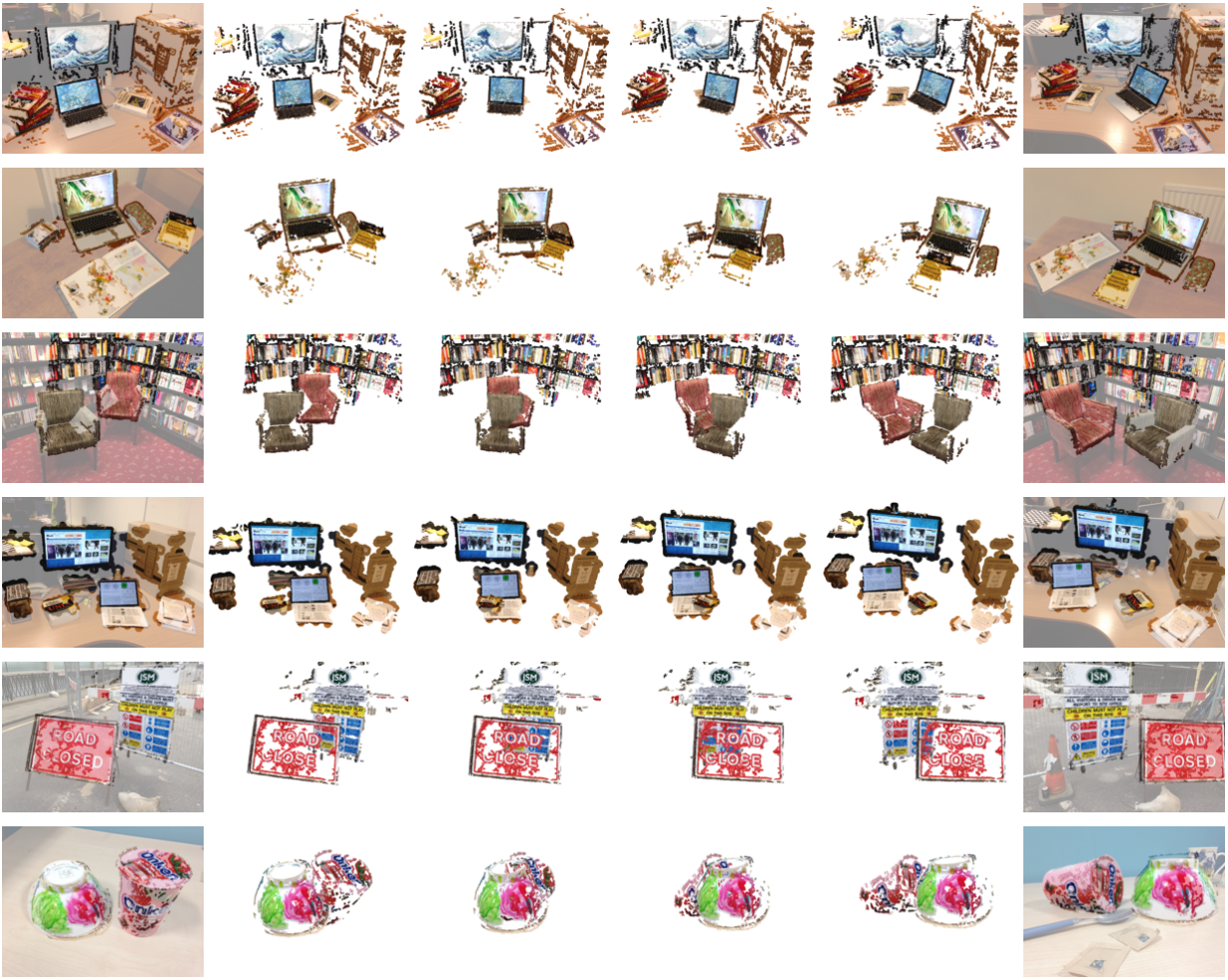

Figure 7: Motion interpolation with our dense reconstruction from only a pair of input images (overlaid on first/last columns).

Bibtex

@article{Wang:2015,
  title = {Dynamic SfM: Detecting Scene Changes from Image Pairs},
  author = {Tuanfeng Y. Wang and Pushmeet Kohli and Niloy J. Mitra},
  year = {2015},
  journal = {{Symposium on Geometry Processing 2015}}
}

Acknowledgements

We thank the reviewers for their comments and suggestions for improving the paper. Special thanks to Aron Monszpart, Duygu Ceylan and Christopher Russell for invaluable comments, support and discussions. This work was supported in part by ERC Starting Grant SmartGeometry (StG-2013-335373) and a Microsoft PhD scholarship.

Links

Paper (34 MB)

Supplementary Video (ZIP 76 MB)

Slides (24 MB)

Code / Data (ZIP 59 MB)
