Unsupervised Texture Transfer from Images to Model Collections

- Tuanfeng Y. Wang¹

- Hao Su²

- Qixing Huang³

- Jingwei Huang²

- Leonidas Guibas²

- Niloy J. Mitra¹

¹University College London ²Stanford University ³TTIC/UT Austin

SIGGRAPH Asia 2016

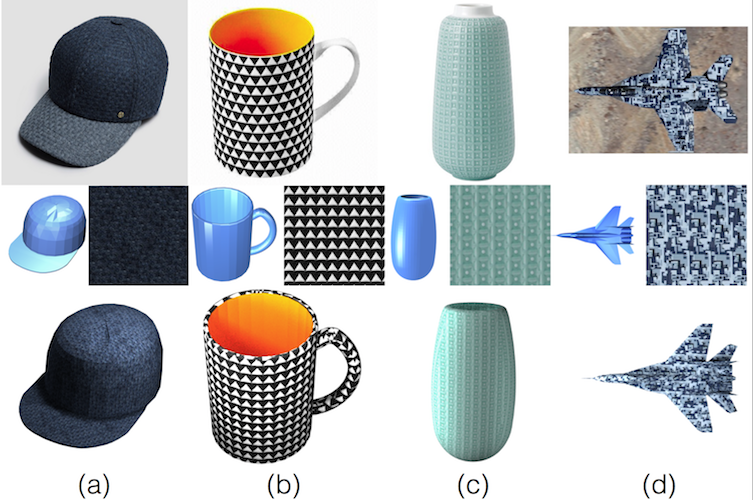

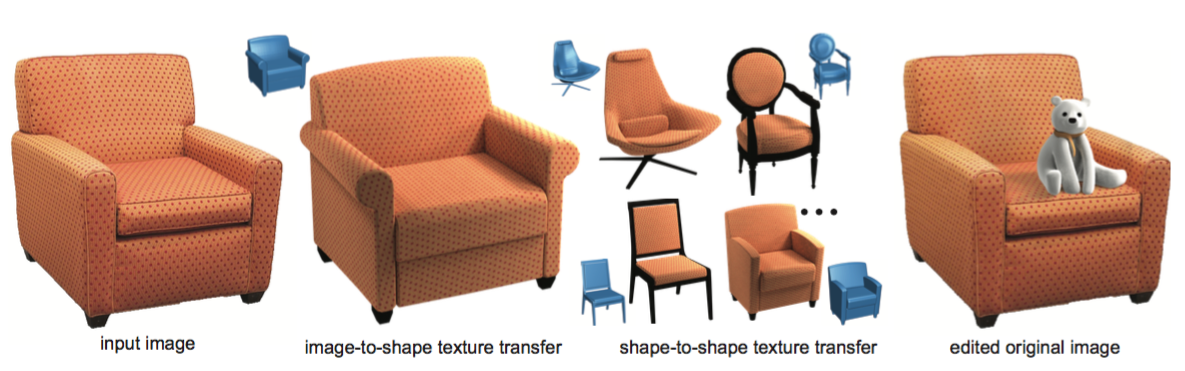

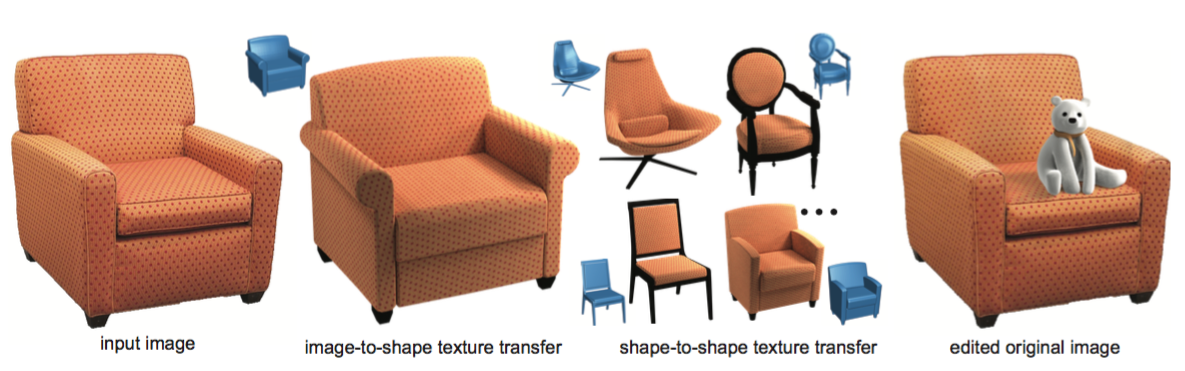

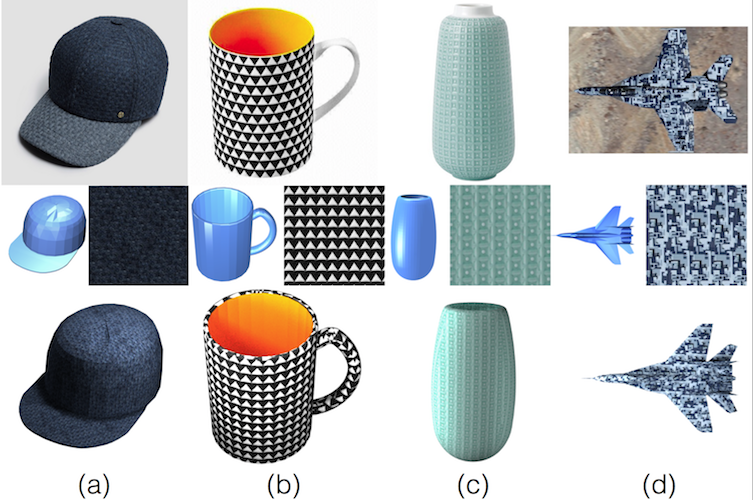

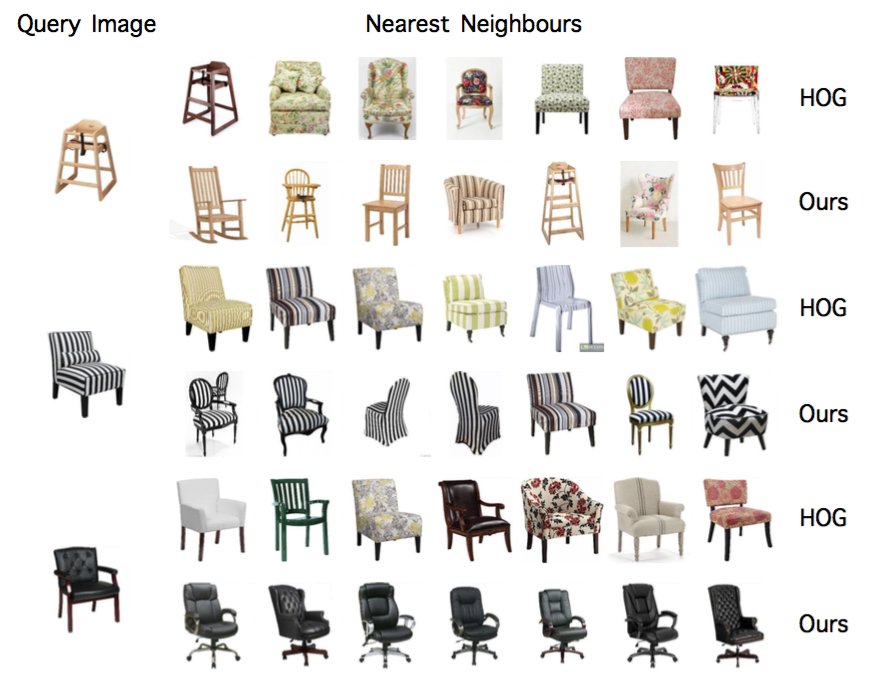

Starting from an input image and a 3D model collection, we propose an unsupervised, scalable method for image-to-shape and shape-to-shape texture transfer. The method also allows novel objects to be inserted into the original image (right). It exploits approximate geometry priors to factor out both geometric and illumination effects. The corresponding original 3D models are shown in blue.

Abstract

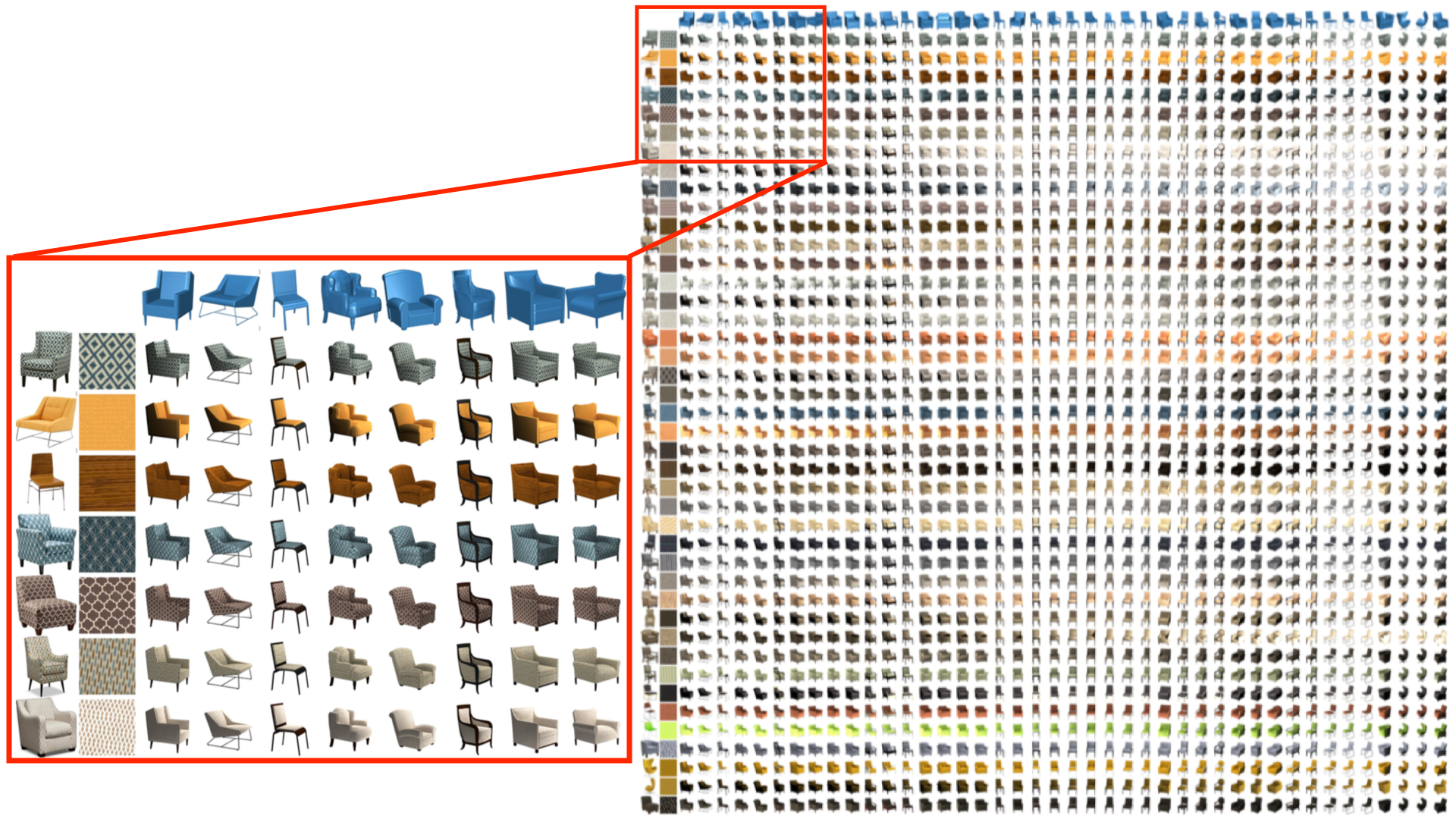

Large 3D model repositories of common objects are now ubiquitous and are increasingly being used in computer graphics and computer vision for both analysis and synthesis tasks. However, images of objects in the real world have a richness of appearance that these repositories do not capture, largely because most existing 3D models are untextured. In this work we develop an automated pipeline capable of transporting texture information from images of real objects to 3D models of similar objects. This is a challenging problem, as an object’s texture as seen in a photograph is distorted by many factors, including pose, geometry, and illumination. These geometric and photometric distortions must be undone in order to transfer the pure underlying texture to a new object — the 3D model. Instead of using problematic dense correspondences, we factorize the problem into the reconstruction of a set of base textures (materials) and an illumination model for the object in the image. By exploiting the geometry of the similar 3D model, we reconstruct certain reliable texture regions and correct for the illumination, from which a full texture map can be recovered and applied to the model. Our method allows for large-scale unsupervised production of richly textured 3D models directly from image data, providing high quality virtual objects for 3D scene design or photo editing applications, as well as a wealth of data for training machine learning algorithms for various inference tasks in graphics and vision.

Overview

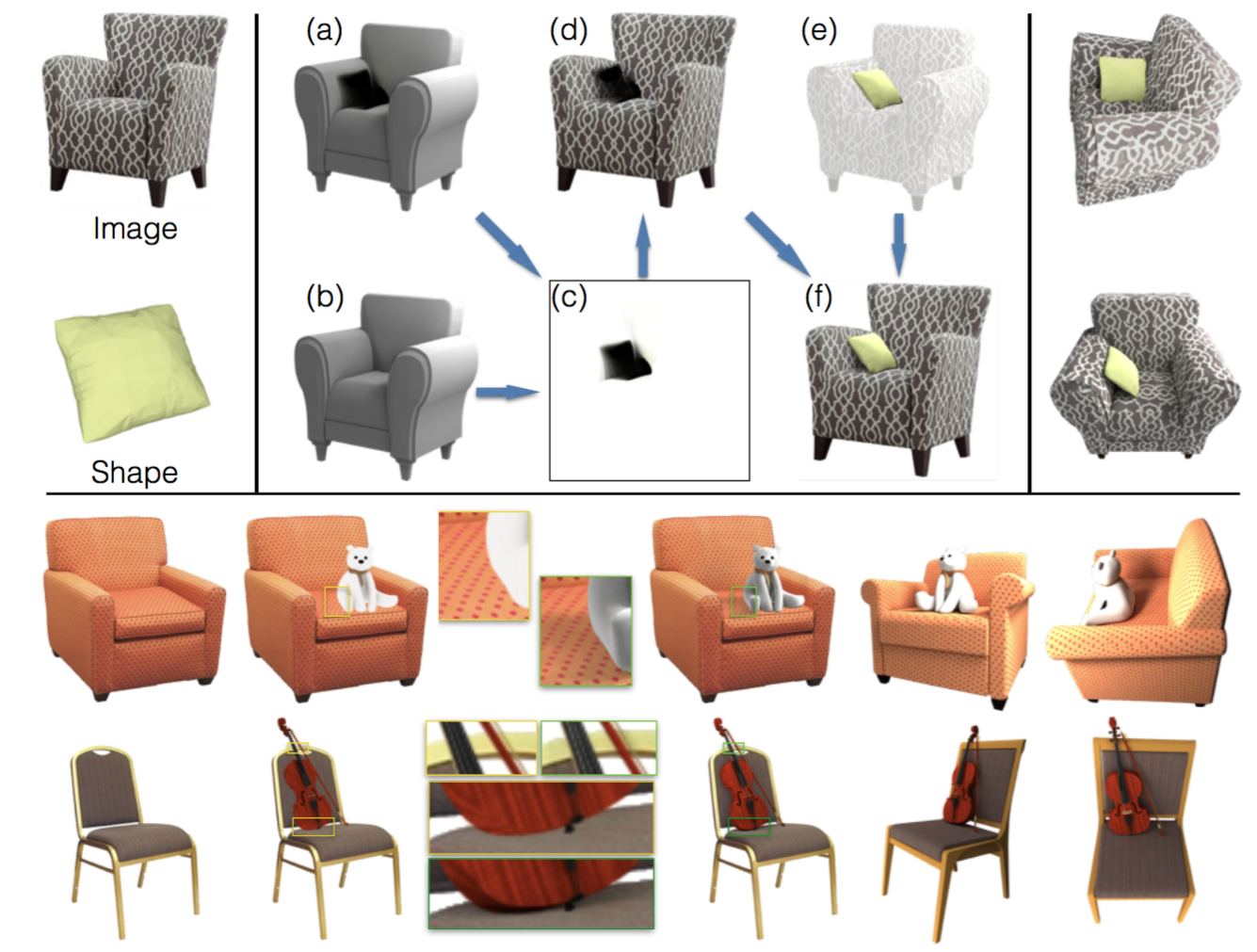

Pipeline overview. Our system takes an input image (a). A geometrically similar shape is then retrieved (b1). According to the estimated geometry we find large patches on the image (b2) and detect their correspondences (b3). The geometry also helps to factor out shading (c) and reflectance (d), so that base textures (e) are extracted with little distortion and homogeneous lighting. Final texture transfer can be applied to the retrieved model (f).
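To make the shading/reflectance step (c, d) more concrete, here is a minimal per-pixel sketch. It is not the paper's illumination model: it assumes a Lambertian surface lit by a single directional light plus a constant ambient term, and the function names `lambertian_shading` and `recover_reflectance` are hypothetical. It only illustrates the idea that, once geometry gives a surface normal, the observed colour can be divided by an estimated shading value to approximate the underlying reflectance.

```python
import math


def lambertian_shading(normal, light_dir, ambient=0.2):
    """Shading for one pixel under an ASSUMED model: ambient + directional Lambertian.

    normal    -- unit surface normal (x, y, z), e.g. rendered from the retrieved shape
    light_dir -- direction towards the light (normalised here)
    """
    length = math.sqrt(sum(c * c for c in light_dir))
    light = [c / length for c in light_dir]
    # Clamp at zero: surfaces facing away from the light receive only ambient light.
    diffuse = max(0.0, sum(n * l for n, l in zip(normal, light)))
    return ambient + (1.0 - ambient) * diffuse


def recover_reflectance(pixel_rgb, shading, eps=1e-6):
    """Divide the observed colour by the shading to approximate reflectance."""
    s = max(shading, eps)  # guard against division by zero in very dark regions
    return tuple(c / s for c in pixel_rgb)


# Usage: a surface tilted 60 degrees away from the light direction.
albedo = (0.8, 0.4, 0.2)
normal = (math.sin(math.radians(60)), 0.0, math.cos(math.radians(60)))
shade = lambertian_shading(normal, (0.0, 0.0, 1.0))
observed = tuple(a * shade for a in albedo)
recovered = recover_reflectance(observed, shade)
```

In this toy setting `recovered` matches `albedo` up to floating-point error, because the same shading model is used to synthesise and to undo the lighting. Real photographs need a richer illumination estimate than this single-light assumption.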

Texture transfer results

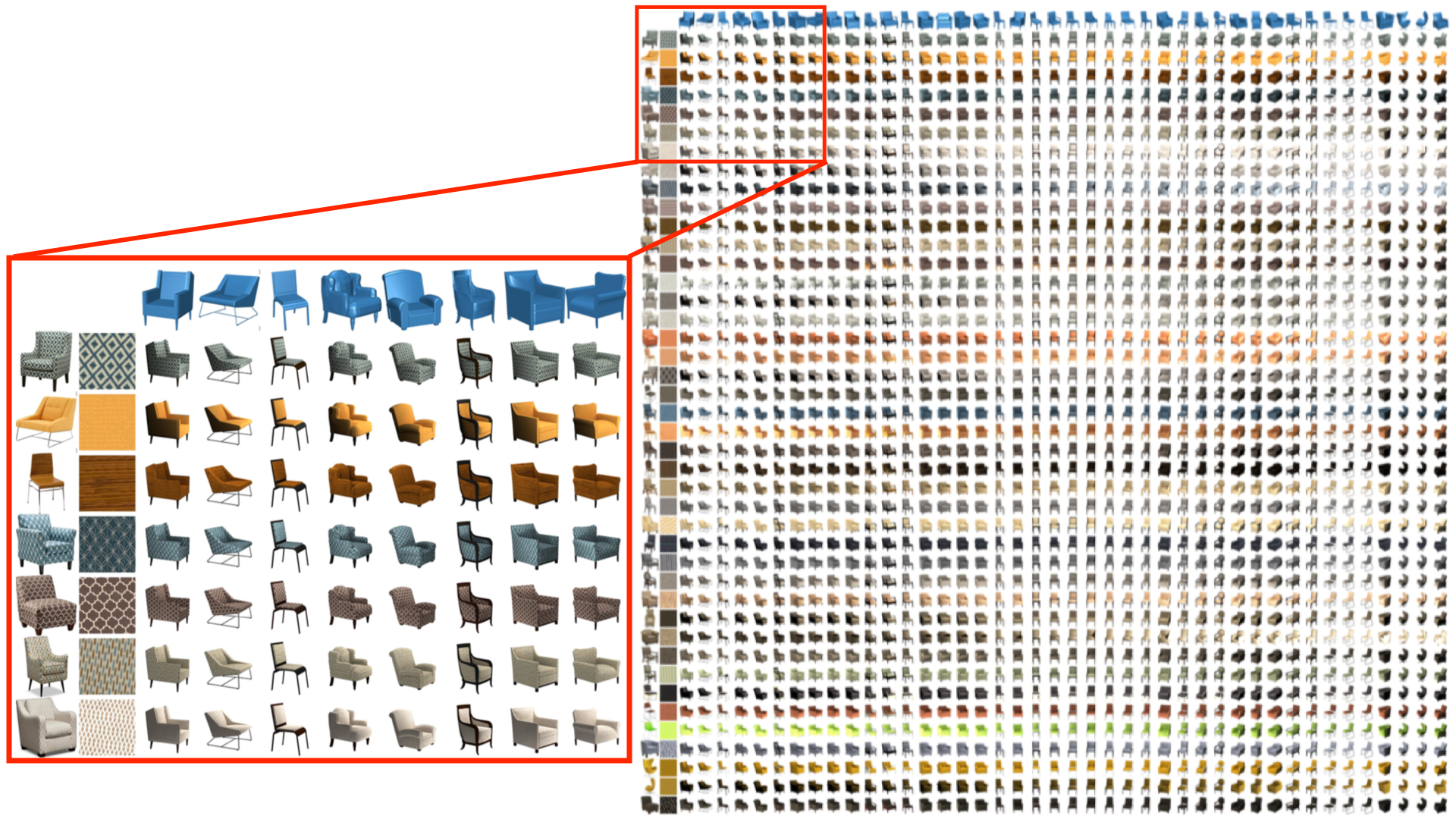

Image-to-shape and shape-to-shape texture transfer results for ‘cushion’ and ‘table’ classes.

Texture transfer results for other categories. First row: input image. Second row: retrieved shape and extracted texture patch. Last row: re-rendered image with our texture transfer output and estimated illumination. Note that the retrieved shapes differ from the objects in the input images, for example in the brim of the cap, the handle of the mug, the top of the vase, and the wings of the aeroplane.

The results (textured 3D models along with the recovered illumination settings) are also available for download as supplementary material.

Evaluation

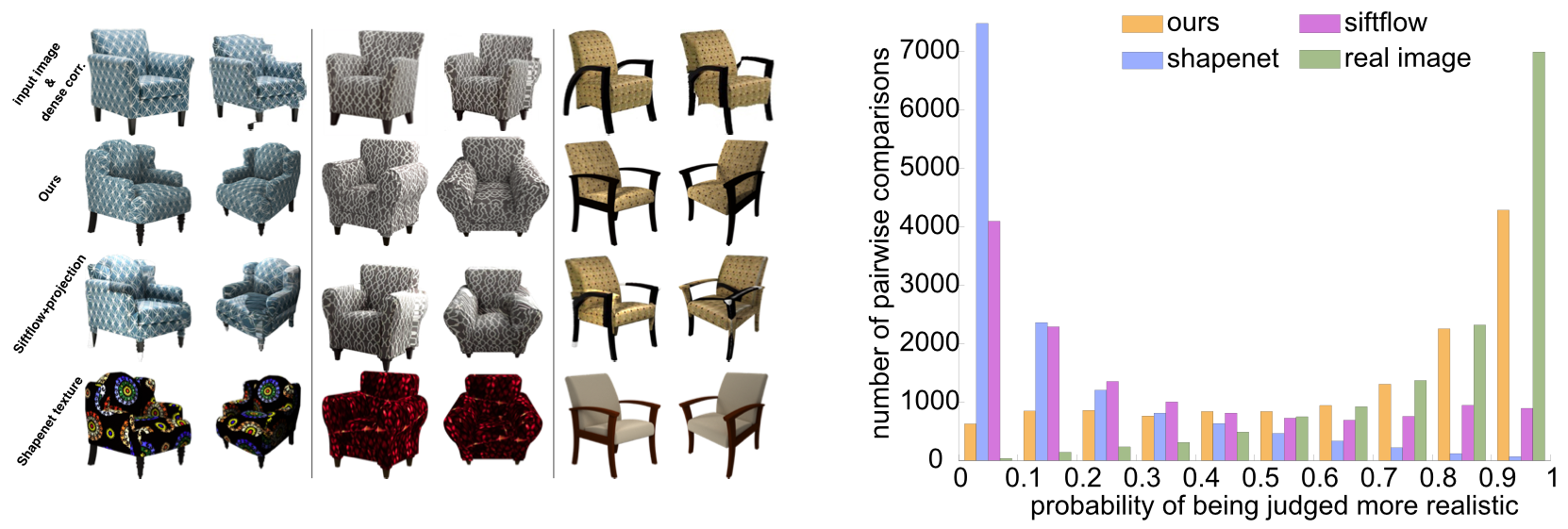

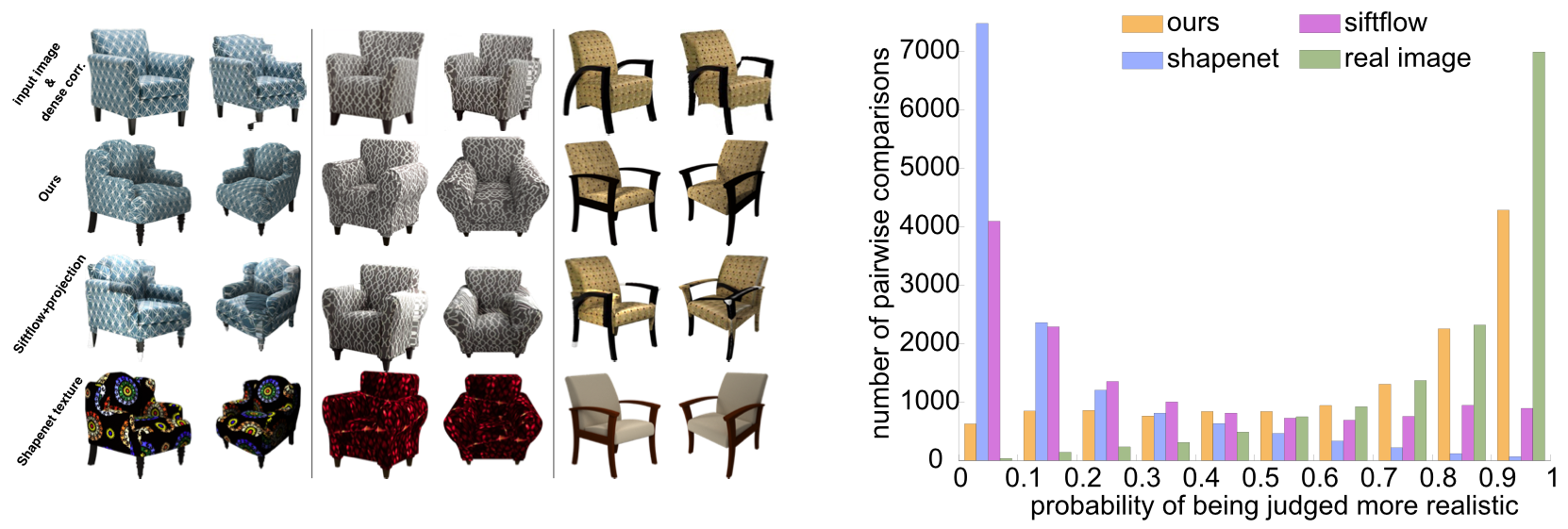

Left figure: Baseline examples. First row: input image (left), after applying SIFTflow (right); second row: our results; third row: SIFTflow + projection; fourth row: ShapeNet textures.

Right figure: Probability that each image is rated as winning against each other image, computed with the Bradley-Terry model [Hunter 2004]. Note that users consistently rated our results as realistic.
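For readers unfamiliar with the Bradley-Terry model, the sketch below shows one common way to fit it from pairwise preference counts, using the minorization-maximization update described by Hunter (2004). It is an illustration only, not the evaluation code used for the user study: the function names and the toy win matrix are hypothetical. Each item i receives a positive strength p_i, and the modelled probability that i is preferred over j is p_i / (p_i + p_j).

```python
def fit_bradley_terry(wins, iters=200):
    """Estimate Bradley-Terry strengths from a square matrix of win counts.

    wins[i][j] is the number of times item i was preferred over item j.
    Assumes every item has at least one win and one loss against the rest
    (more precisely, that the comparison graph is strongly connected).
    """
    n = len(wins)
    p = [1.0 / n] * n
    for _ in range(iters):
        updated = []
        for i in range(n):
            total_wins = sum(wins[i][j] for j in range(n) if j != i)
            # Sum over opponents of (number of comparisons) / (p_i + p_j).
            denom = sum(
                (wins[i][j] + wins[j][i]) / (p[i] + p[j])
                for j in range(n)
                if j != i
            )
            updated.append(total_wins / denom if denom > 0 else p[i])
        scale = sum(updated)
        p = [v / scale for v in updated]  # normalise so strengths sum to 1
    return p


def win_probability(p, i, j):
    """Modelled probability that item i is preferred over item j."""
    return p[i] / (p[i] + p[j])


# Usage with made-up counts: three methods, each pair compared four times.
toy_wins = [
    [0, 3, 4],
    [1, 0, 3],
    [0, 1, 0],
]
strengths = fit_bradley_terry(toy_wins)
prob_first_beats_second = win_probability(strengths, 0, 1)
```

With these toy counts the first item ends up with the largest strength, reflecting that it won most of its comparisons.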

Applications

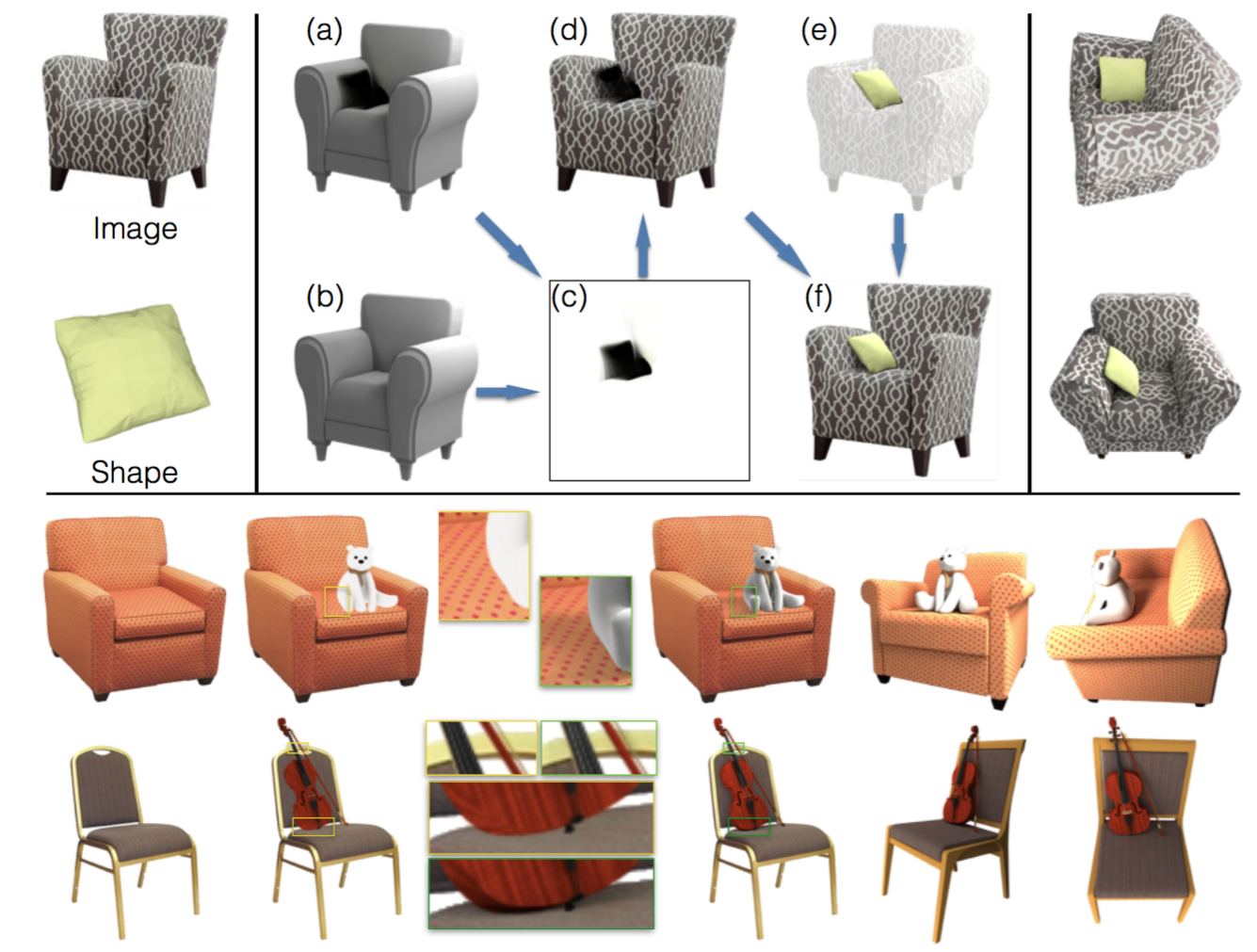

Image editing: inserting new objects into the scene. We use the estimated illumination to realistically insert novel objects into the input image. Again, geometry from the retrieved shape helps to estimate shadowing effects. The above figure shows a few examples. Note that, unlike state-of-the-art object insertion methods [Zheng et al. 2012; Kholgade et al. 2014], the above workflow only requires the user to specify the position of the inserted object; the remaining steps are automatic.

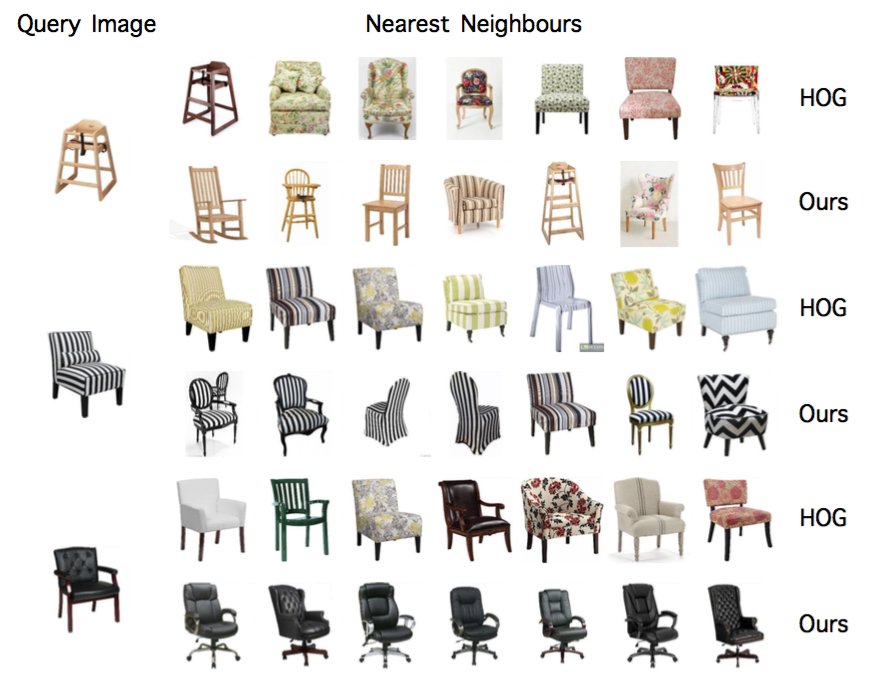

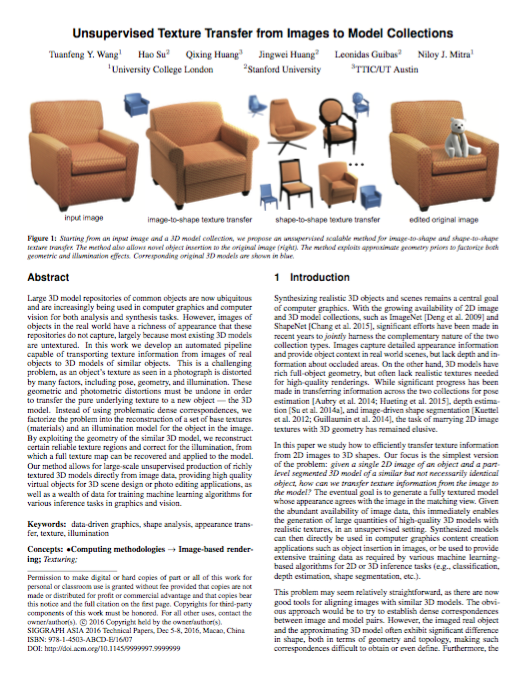

Texture-guided image retrieval. We also train an image retrieval system that focuses on texture similarity and is agnostic to other nuisance factors. The above figure shows examples of texture-guided image retrieval.

Bibtex

@article{Wang:2016:UTT:2980179.2982404,
  author = {Wang, Tuanfeng Y. and Su, Hao and Huang, Qixing and Huang, Jingwei and Guibas, Leonidas and Mitra, Niloy J.},
  title = {Unsupervised Texture Transfer from Images to Model Collections},
  journal = {ACM Trans. Graph.},
  volume = {35},
  number = {6},
  year = {2016},
  issn = {0730-0301},
  pages = {177:1--177:13},
  articleno = {177},
  numpages = {13},
  url = {http://doi.acm.org/10.1145/2980179.2982404}
}

Acknowledgements

We thank the reviewers for their comments and suggestions for improving the paper. We thank George Drettakis for helpful discussion, Paul Guerrero for helping with the user study, and Moos Heuting for proofreading the paper. This work was supported in part by a Microsoft PhD scholarship, the ERC Starting Grant SmartGeometry (StG-2013-335373), NSF grants DMS-1521583, DMS-1521608 and DMS-1546206, UCB MURI grant N00014-13-1-0341, a Google Focused Research award, and gifts from Adobe and Intel.

Links

Paper (~80 MB)

Supplementary material (~100 MB)

Data and Code (~1.6 GB)