RigidFusion: RGB-D Scene Reconstruction with Rigidly-moving Objects

Yu-Shiang Wong1 Changjian Li1 Matthias Nießner2 Niloy J. Mitra1,3

1University College London

2Technical University Munich

3Adobe Research

Eurographics 2021

Abstract

Although surface reconstruction from depth data has made significant advances in recent years, handling changing environments remains a major challenge. This is unsatisfactory, as humans regularly move objects in their environments. Existing solutions focus on a restricted set of objects (e.g., those detected by semantic classifiers), possibly with template meshes, assume a static camera, or mark objects touched by humans as moving. We remove these assumptions by introducing RigidFusion. Our core idea is a novel asynchronous moving-object detection method, combined with a modified volumetric fusion. This is achieved by a model-to-frame TSDF decomposition that leverages free-space carving of the tracked depth values of the current frame with respect to the background model at run-time. As output, we produce separate volumetric reconstructions for the background and for each moving object in the scene, along with each object's trajectory over time. Our method does not rely on object priors (e.g., semantic labels or pre-scanned meshes) and is insensitive to the motion residuals between objects and the camera. In comparison to state-of-the-art methods (e.g., Co-Fusion, MaskFusion), we handle significantly more challenging reconstruction scenarios involving a moving camera, and we improve moving-object detection (26% on the miss-detection ratio), tracking (27% on MOTA), and reconstruction (3% on the reconstruction F1) on the synthetic dataset.

Video

Asynchronous Reconstruction

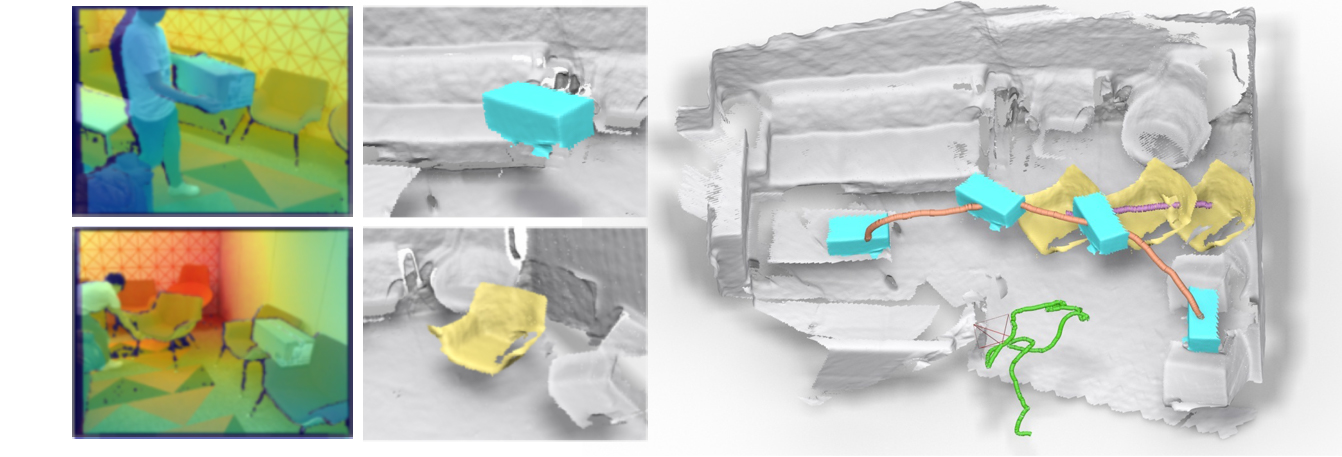

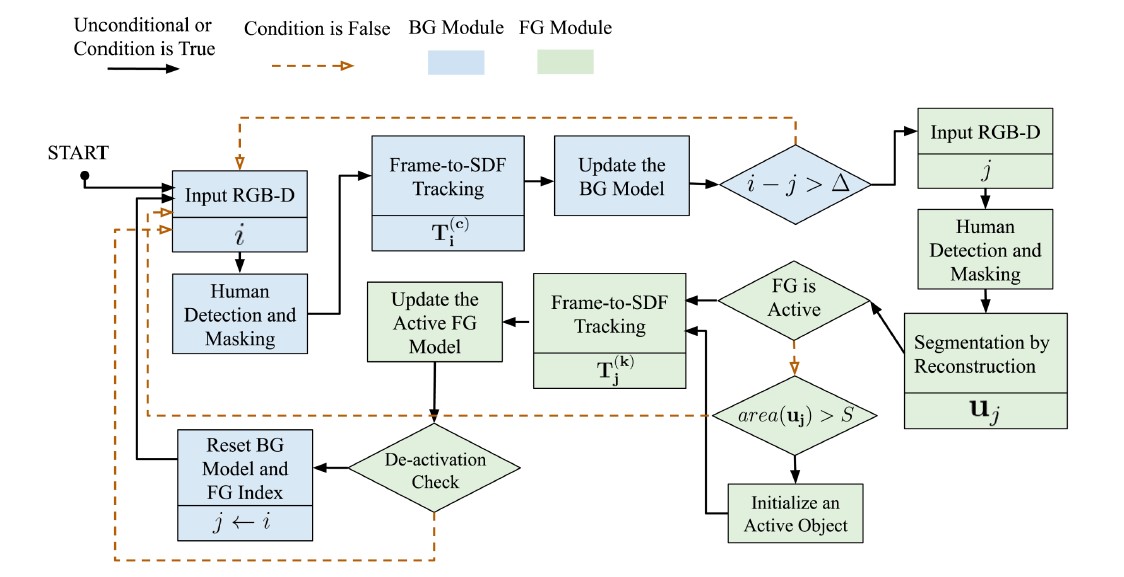

RigidFusion’s reconstruction preview and flowchart. Our method runs at 1 fps with a delay of a few frames. The short delay between the background and foreground modules allows the system to accumulate more free-space information, which is used as the segmentation prior.
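To make the free-space idea concrete, the following is a minimal illustrative sketch, not the RigidFusion implementation. It assumes the background model has already been rendered to a depth map in the same camera view as a handful of delayed frames, and it works on depth maps rather than on a TSDF volume. The function names, the `margin` threshold, and the `min_votes` count are all hypothetical choices made for this example. A pixel votes for "carved" when the observed ray travels past the model surface, meaning the model surface lies in observed free space and has likely moved.

```python
import numpy as np


def free_space_conflicts(model_depth, observed_depth, margin=0.05):
    """Compare a rendered background depth map with one observed frame.

    Returns two boolean masks (hypothetical naming):
      carved   - the observation is farther than the model surface, so the
                 model surface lies in observed free space
      in_front - the observation is closer than the model surface
    Pixels with depth 0 are treated as invalid and never flagged.
    """
    valid = (model_depth > 0) & (observed_depth > 0)
    carved = valid & (observed_depth > model_depth + margin)
    in_front = valid & (observed_depth < model_depth - margin)
    return carved, in_front


def accumulate_prior(model_depth, frames, margin=0.05, min_votes=2):
    """Accumulate carving votes over several frames into a binary prior.

    Assumes every frame is already aligned to the view of model_depth.
    """
    votes = np.zeros(model_depth.shape, dtype=np.int32)
    for depth in frames:
        carved, _ = free_space_conflicts(model_depth, depth, margin)
        votes += carved  # boolean mask adds one vote per carved pixel
    return votes >= min_votes


if __name__ == "__main__":
    model = np.full((2, 2), 1.0)  # flat background surface at 1 m
    frames = [
        np.array([[1.0, 2.0], [0.0, 1.02]]),
        np.array([[1.0, 2.0], [2.0, 0.5]]),
    ]
    print(accumulate_prior(model, frames))
```

Requiring several votes before trusting a pixel mirrors the role of the short delay described in the caption: waiting a few frames lets more free-space evidence build up before it is used as a prior, at the cost of latency.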

Our 4D Reconstruction Results

Our method works in both low-dynamic and high-dynamic settings and produces plausible results.

Bibtex

@article {wong2021rigidfusion,

journal = {Computer Graphics Forum},

title = {{RigidFusion: RGB-D Scene Reconstruction with Rigidly-moving Objects}},

author = {Wong, Yu-Shiang and Li, Changjian and Nie{\ss}ner, Matthias and Mitra, Niloy J.},

year = {2021},

publisher = {The Eurographics Association and John Wiley \& Sons Ltd.},

ISSN = {1467-8659},

DOI = {10.1111/cgf.142651},

}

Acknowledgements

This research was supported by the ERC Starting Grant Scan2CAD (804724), ERC Smart Geometry Grant, and gifts from Adobe.