iMapper: Interaction-guided Scene Mapping from Monocular Videos

- Aron Monszpart¹
- Paul Guerrero¹
- Duygu Ceylan²
- Ersin Yumer³
- Niloy J. Mitra¹,²

¹ University College London

² Adobe Research

³ Uber ATG

SIGGRAPH 2019

Abstract

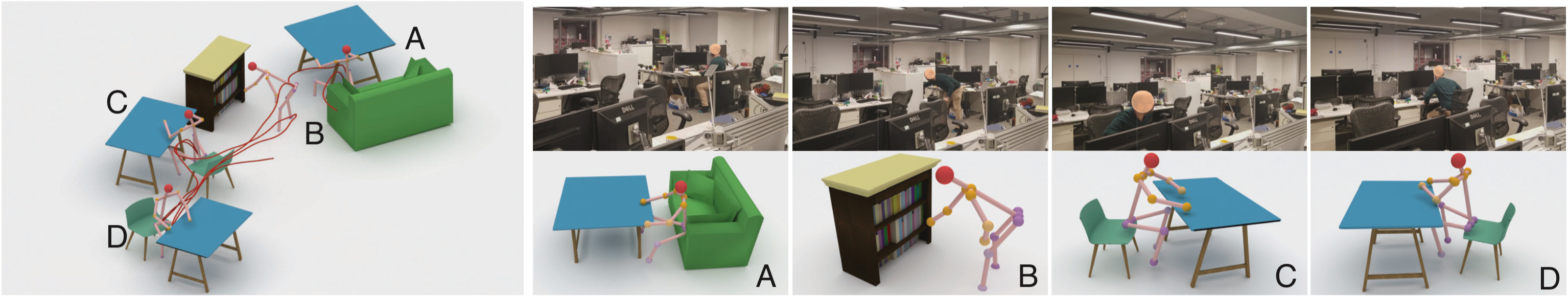

Next-generation smart and augmented reality systems demand a computational understanding of monocular footage that captures humans in physical spaces, in order to reveal plausible object arrangements and human-object interactions. Despite recent advances in both scene layout and human motion analysis, this setting remains challenging to analyze because of the frequent occlusions between objects and humans. We observe that object arrangements and human actions are often strongly correlated, and that this correlation can help recover from these occlusions. We present iMapper, a data-driven method that identifies such human-object interactions and uses them to infer the layouts of occluded objects. Starting from a monocular video with detected 2D human joint positions that are potentially noisy and occluded, we first introduce the notion of interaction-saliency: space-time snapshots where informative human-object interactions happen. Then, we propose a global optimization that retrieves interactions from a database and fits them to the detected salient interactions so as to best explain the input video. We extensively evaluate the approach, both quantitatively against manually annotated ground truth and through a user study, and demonstrate that iMapper produces plausible scene layouts for scenes with medium to heavy occlusion.
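The "retrieve and fit" idea can be illustrated with a toy sketch. This is not iMapper's algorithm: it assumes, hypothetically, that each detected salient interaction and each database entry is already a 3D joint trajectory of shape (T, J, 3), and it fits only a rigid translation. For translation-only alignment the least-squares offset has a closed form (the difference of the mean joint positions), after which each candidate is scored by its squared residual. The names `align_translation` and `retrieve_and_fit` are illustrative, not from the paper.

```python
import numpy as np


def align_translation(query, candidate):
    """Least-squares translation mapping `candidate` onto `query`.

    Both arrays have shape (T, J, 3): T frames, J joints, 3D positions.
    Minimizing sum ||q_i - (c_i + t)||^2 over t gives t = mean(q) - mean(c).
    """
    return query.reshape(-1, 3).mean(axis=0) - candidate.reshape(-1, 3).mean(axis=0)


def retrieve_and_fit(query, database):
    """Toy retrieval (hypothetical, not the paper's method).

    Returns (best_index, best_offset, best_cost) for the database snippet
    with the lowest squared residual after translation fitting.
    """
    best_index, best_offset, best_cost = None, None, np.inf
    for index, candidate in enumerate(database):
        if candidate.shape != query.shape:
            continue  # toy assumption: only compare snippets of equal length and joint count
        offset = align_translation(query, candidate)
        cost = float(np.sum((query - (candidate + offset)) ** 2))
        if cost < best_cost:
            best_index, best_offset, best_cost = index, offset, cost
    return best_index, best_offset, best_cost
```

For example, a query built by shifting `database[1]` by `[2.0, 0.0, -1.0]` should retrieve index 1 with that offset and a near-zero cost. The real method additionally has to work from noisy, occluded 2D detections and optimize jointly over the whole video, which this sketch does not attempt.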


BibTeX

@ARTICLE{MonszpartEtAl:iMapper:Siggraph:2019,
  author = {Aron Monszpart and Paul Guerrero and Duygu Ceylan and Ersin Yumer and Niloy J. Mitra},
  title = {{iMapper}: Interaction-guided Scene Mapping from Monocular Videos},
  journal = {{ACM SIGGRAPH}},
  year = 2019,
  month = jul
}

Acknowledgements

This project was supported by an ERC Starting Grant (SmartGeometry StG-2013-335373), an ERC PoC Grant (SemanticCity), Google Faculty Awards, Google PhD Fellowships, a Royal Society Advanced Newton Fellowship, and gifts from Adobe. We also thank Sebastian Friston, Tuanfeng Y. Wang, Moos Hueting, Drew MacQuarrie, Denis Tomè, Dushyant Mehta, Grégory Rogez, Manolis Savva, Veronika Monszpart-Benis and Krisztian Monszpart for their help and advice.