Neural Semantic Surface Maps

Luca Morreale¹, Noam Aigerman²,³, Vladimir G. Kim², Niloy J. Mitra¹,²

¹ University College London

² Adobe Research

³ University of Montreal

Abstract

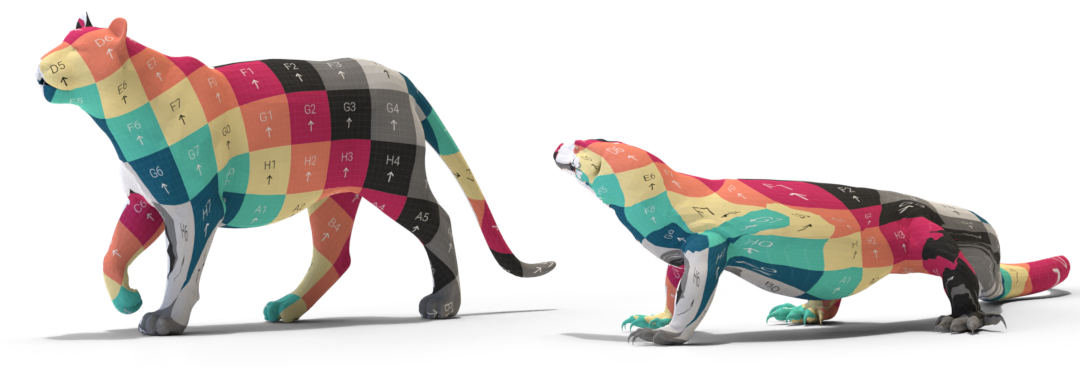

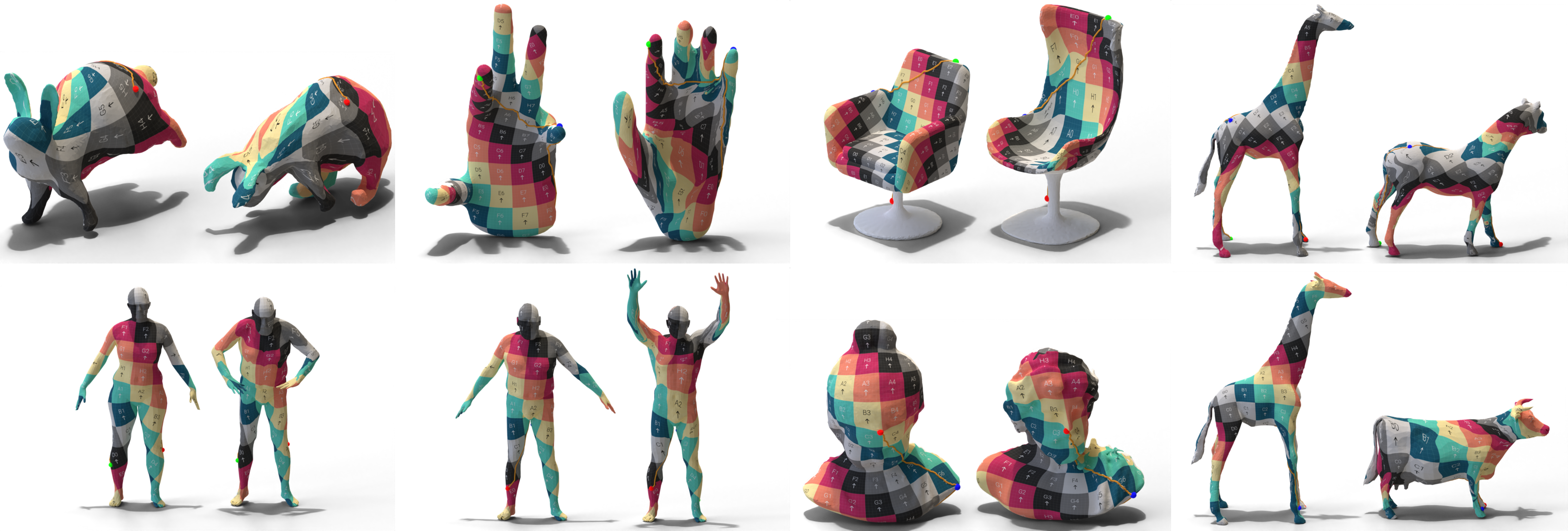

We present an automated technique for computing a map between two genus-zero shapes, which matches semantically corresponding regions to one another. Lack of annotated data prohibits direct inference of 3D semantic priors; instead, current state-of-the-art methods predominantly optimize geometric properties or require varying amounts of manual annotation. To overcome the lack of annotated training data, we distill semantic matches from pre-trained vision models: our method renders the pair of untextured 3D shapes from multiple viewpoints; the resulting renders are then fed into an off-the-shelf image-matching strategy that leverages a pre-trained visual model to produce feature points. This yields semantic correspondences, which are projected back to the 3D shapes, producing a raw matching that is inaccurate and inconsistent across different viewpoints. These correspondences are refined and distilled into an inter-surface map by a dedicated optimization scheme, which promotes bijectivity and continuity of the output map. We illustrate that our approach can generate semantic surface-to-surface maps, eliminating manual annotations or any 3D training data requirement. Furthermore, it proves effective in scenarios with high semantic complexity, where objects are non-isometrically related, as well as in situations where they are nearly isometric.
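To make the "projected back to the 3D shapes" step concrete, here is a minimal sketch of lifting per-view pixel matches to vertex pairs. It assumes each render comes with a per-pixel vertex-ID buffer (`id_map_src`, `id_map_tgt`, with `-1` for background); these names, the buffer itself, and the majority vote in `aggregate_votes` are illustrative assumptions, not the paper's procedure. The paper resolves cross-view inconsistency with a dedicated optimization rather than voting.

```python
from collections import Counter, defaultdict

import numpy as np


def lift_matches(pixel_matches, id_map_src, id_map_tgt, background=-1):
    """Turn 2D pixel matches from one view into 3D vertex-index pairs.

    pixel_matches: iterable of ((row, col) in source render, (row, col) in target render).
    id_map_*: integer arrays holding, per pixel, the index of the visible vertex
              (hypothetical render output; `background` marks empty pixels).
    """
    pairs = []
    for (rs, cs), (rt, ct) in pixel_matches:
        vs = id_map_src[rs, cs]
        vt = id_map_tgt[rt, ct]
        # Matches that touch the background cannot be lifted to the surface.
        if vs != background and vt != background:
            pairs.append((int(vs), int(vt)))
    return pairs


def aggregate_votes(per_view_pairs):
    """Majority vote over views: source vertex -> (most frequent target vertex, votes).

    A deliberately crude stand-in for the refinement stage; it only exposes how
    inconsistent the raw matches are across viewpoints.
    """
    votes = defaultdict(Counter)
    for pairs in per_view_pairs:
        for vs, vt in pairs:
            votes[vs][vt] += 1
    return {vs: counter.most_common(1)[0] for vs, counter in votes.items()}
```

The vote count returned for each source vertex gives a rough confidence: a vertex whose matches disagree across views receives few votes for its winning target.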

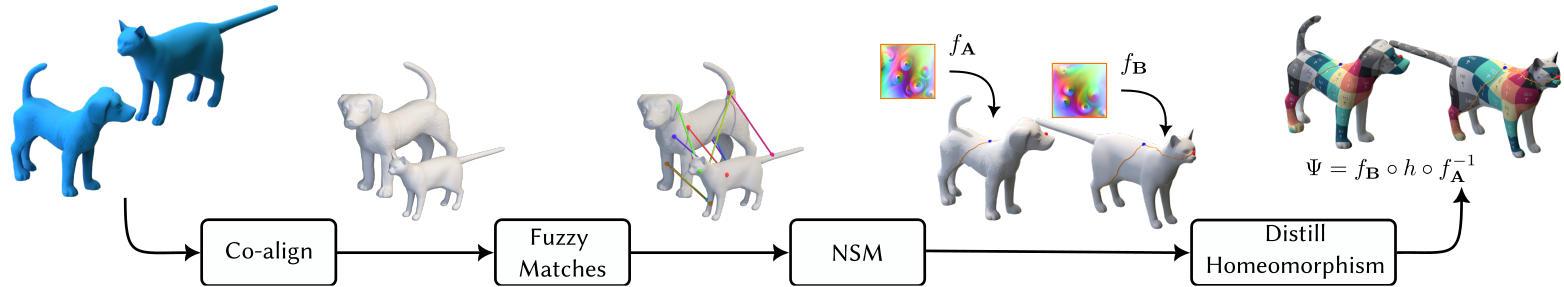

Method overview

Starting from a pair of upright genus-zero surfaces, we automatically distill an inter-surface map from a set of fuzzy matches. First, we align the input shapes, then extract a set of fuzzy matches through DinoV2 semantic visual features. We use these features to independently cut the two meshes and then optimize a (seamless) map between them.
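One common way to obtain sparse matches from dense visual features is mutual nearest-neighbour filtering under cosine similarity. The sketch below assumes the features have already been extracted as two arrays of per-point descriptors (`feat_a`, `feat_b`); the function name and this particular filtering rule are illustrative assumptions, and the matching strategy used in the paper may differ.

```python
import numpy as np


def mutual_nearest_neighbors(feat_a, feat_b):
    """Return (pairs, scores) for descriptors that are each other's best match.

    feat_a: (n, d) array, feat_b: (m, d) array of feature descriptors.
    pairs:  (k, 2) integer array of indices (i into feat_a, j into feat_b).
    scores: (k,) cosine similarity of each kept pair.
    """
    # Normalise rows so that the dot product equals cosine similarity.
    a = feat_a / np.linalg.norm(feat_a, axis=1, keepdims=True)
    b = feat_b / np.linalg.norm(feat_b, axis=1, keepdims=True)
    sim = a @ b.T  # (n, m) similarity matrix

    best_b_for_a = sim.argmax(axis=1)  # best match in B for every row of A
    best_a_for_b = sim.argmax(axis=0)  # best match in A for every row of B

    idx_a = np.arange(a.shape[0])
    # Keep i only if the best match of its best match is i itself.
    keep = best_a_for_b[best_b_for_a] == idx_a

    pairs = np.stack([idx_a[keep], best_b_for_a[keep]], axis=1)
    scores = sim[idx_a[keep], best_b_for_a[keep]]
    return pairs, scores
```

The mutual check discards one-sided matches, which removes many spurious pairs, but the surviving matches can still be noisy and inconsistent; that is why they are treated as fuzzy input to the map optimization rather than as a final correspondence.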

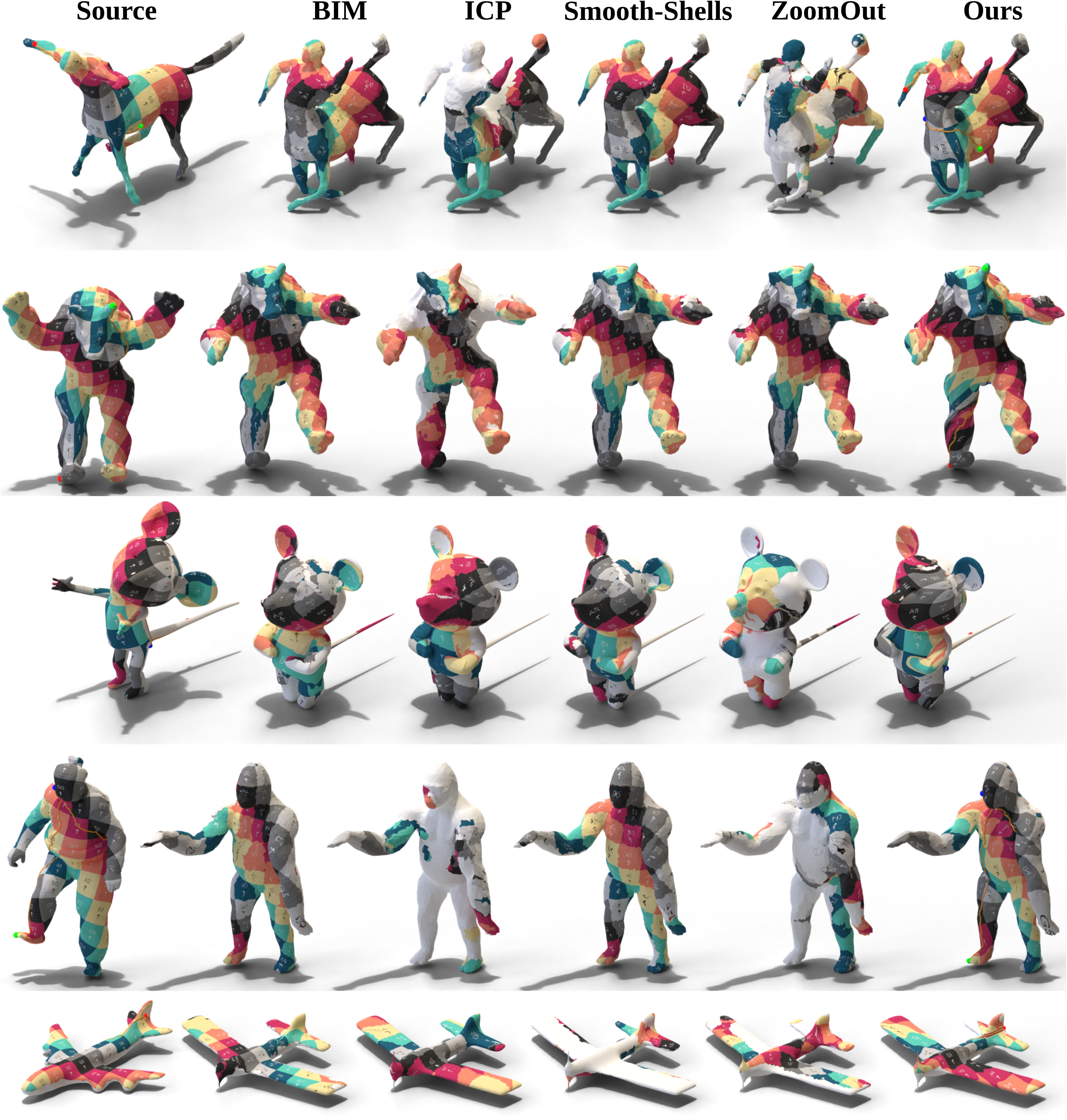

Comparisons

Here we texture only the visible region of the source model, leaving the rest white, and map it to the target shape with different techniques.

| Method | FAUST Inv ↓ | FAUST Bij ↓ | FAUST Acc ↓ | SHREC07 Inv ↓ | SHREC07 Bij ↓ | SHREC07 Acc ↓ | SHREC19 Inv ↓ | SHREC19 Bij ↓ | SHREC19 Acc ↓ |
|---|---|---|---|---|---|---|---|---|---|
| ICP | 0.06 | 0.17 | 0.25 | 0.09 | 0.65 | 0.23 | 0.07 | 0.75 | 0.15 |
| BIM | 0.09 | 0.03 | 0.04 | 0.49 | 0.48 | 0.23 | 0.05 | 0.82 | 0.04 |
| Zoomout | 0.33 | 0.23 | 0.15 | 0.25 | 0.65 | 0.54 | 0.29 | 0.76 | 0.32 |
| Smooth-shells | 0.01 | 0.00 | 0.01 | 0.03 | 0.72 | 0.26 | 0.01 | 0.83 | 0.01 |
| Ours | 0.00 | 0.00 | 0.13 | 0.00 | 0.00 | 0.23 | 0.00 | 0.00 | 0.11 |

Bibtex

@article{morreale2024neural,
  title={Neural Semantic Surface Maps},
  author={Morreale, Luca and Aigerman, Noam and Kim, Vladimir G. and Mitra, Niloy J.},
  journal={Computer Graphics Forum},
  volume={43},
  number={2},
  year={2024},
  organization={Wiley Online Library}
}